Worker safety is a growing concern in human-robot collaboration (HRC) because robots introduce new hazards and risks.

Deep Reinforcement Learning (DRL) has been shown to be efficient in training robots to acquire complex construction skills.

However, neural network policies for collision avoidance lack theoretical safety guarantees and face challenges with out-of-distribution scenarios.

This paper proposes a biomimetic safety-constrained DRL control system, inspired by vertebrate decision-making systems.

A neural network policy serves as the "brain" for complex decision-making, while a reference governor layer functions like the spinal cord,

enabling rapid responses to environmental stimuli and prioritizing safety. Theoretical safety guarantees related to robot dynamics including torque,

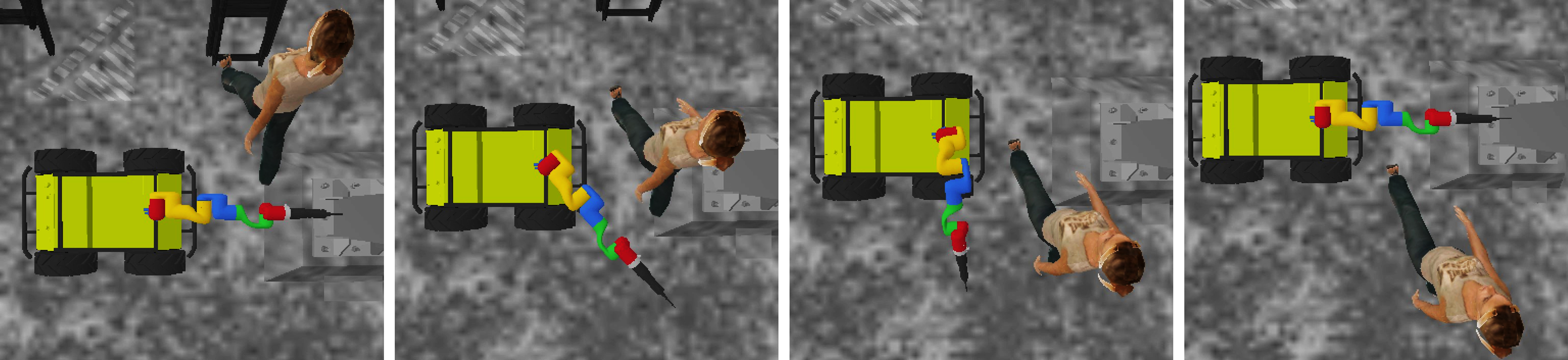

joint angle, velocity, and distance were analyzed. Experimental results demonstrate that the proposed method achieves a 0% collision rate, providing

a safe HRC mode in both static and dynamic construction scenarios.
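
To make the "brain plus spinal cord" layering concrete, the following is a minimal illustrative sketch, not the reference governor analyzed in this paper. It assumes the policy outputs a joint-velocity command and that a safety layer may only scale that command by a factor between 0 and 1. The names `Limits`, `govern`, `max_joint_speed`, `min_distance`, and `slow_distance` are hypothetical, and torque and joint-angle constraints are omitted for brevity.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Limits:
    """Hypothetical safety limits; values and names are assumptions."""
    max_joint_speed: float  # rad/s, largest allowed joint speed
    min_distance: float     # m, separation at which motion must stop
    slow_distance: float    # m, separation at which slowing begins (> min_distance)


def govern(policy_cmd: Sequence[float], distance: float, limits: Limits) -> List[float]:
    """Scale the policy's joint-velocity command so both limits hold.

    The policy ("brain") proposes policy_cmd; this layer ("spinal cord")
    never changes its direction, it only shrinks its magnitude.
    """
    # Distance scale: 0 at or below min_distance, 1 at or beyond slow_distance.
    span = limits.slow_distance - limits.min_distance
    dist_scale = min(1.0, max(0.0, (distance - limits.min_distance) / span))

    # Speed scale: shrink uniformly so the fastest joint meets the speed limit.
    peak = max((abs(v) for v in policy_cmd), default=0.0)
    if peak <= limits.max_joint_speed:
        speed_scale = 1.0
    else:
        speed_scale = limits.max_joint_speed / peak

    # The more restrictive constraint decides the final scale.
    scale = min(dist_scale, speed_scale)
    return [scale * v for v in policy_cmd]
```

Because the filter sits after the policy and depends only on measured quantities, it constrains the command regardless of what the network outputs. That is the property that makes a governor-style layer attractive for out-of-distribution inputs, although this sketch carries no formal guarantee.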